Use the numpy library to complete the Polynomial-related code.

Requirement

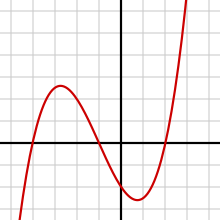

One way of looking at the dimensionality of an object is to examine how the “amount inside” scales with the linear dimension of the object. For example, we know the area of a circle is given by A = πr^2. As shown in Fig. 1, one could count the squares inside a set of circles and plot the number vs. r. Then, by fitting a function A(r) = ar^d to the data points, you could determine an approximate formula for the area, and the exponent d gives the dimensionality of the circle. It should come out very close to 2, but of course there will be some error from counting or not counting squares that don’t quite fit the circle.
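The square-counting idea can be sketched in a few lines. This is only an illustration under one assumed counting rule (a unit square is counted when its center lies inside the circle); the function name `count_squares_in_circle` and the choice of radii are hypothetical, not part of the assignment.

```python
import numpy as np

def count_squares_in_circle(r):
    """Count unit grid squares whose centers lie within distance r of the origin.

    Assumption: square centers sit on integer coordinates, and a square
    counts as "inside" when its center is inside the circle.
    """
    n = int(np.ceil(r))
    coords = np.arange(-n, n + 1)
    x, y = np.meshgrid(coords, coords)
    return int(np.count_nonzero(x**2 + y**2 <= r**2))

# Counts for a set of circles; these are the data points one would plot vs. r.
radii = np.arange(5, 51, 5)
counts = np.array([count_squares_in_circle(r) for r in radii])

for r, n in zip(radii, counts):
    print(f"r = {r:2d}  squares = {n:5d}  pi*r^2 = {np.pi * r**2:8.1f}")
```

A different counting rule (for example, requiring the whole square to fit inside the circle) would shift the counts slightly, which is the counting error mentioned above.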

In homework 9 you did a polynomial fit using numpy.polyfit(), but here you need to determine the power itself, so you can’t fit a polynomial, which has fixed integer powers.

Instead you can use scipy.optimize.curve_fit(), which is documented online. There are of course other possible methods, which you are free to try.
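As a rough sketch of how such a fit might look, the example below fits A(r) = ar^d to counts of grid points inside circles. The helper name `power_law`, the starting guess `p0`, and the range of radii are illustrative assumptions. The log-log straight-line fit at the end is one of the other possible methods, included only as a cross-check.

```python
import numpy as np
from scipy.optimize import curve_fit

def power_law(r, a, d):
    """Model function A(r) = a * r**d with free prefactor a and exponent d."""
    return a * r**d

# Example data: number of integer grid points within radius r of the origin.
coords = np.arange(-50, 51)
x, y = np.meshgrid(coords, coords)
dist2 = x**2 + y**2
radii = np.arange(5.0, 51.0, 5.0)
counts = np.array([np.count_nonzero(dist2 <= r**2) for r in radii])

# Nonlinear least-squares fit; p0 is an initial guess for (a, d).
popt, pcov = curve_fit(power_law, radii, counts, p0=(1.0, 1.5))
a_fit, d_fit = popt
a_err, d_err = np.sqrt(np.diag(pcov))  # one-sigma uncertainties from the fit
print(f"curve_fit: a = {a_fit:.3f} +/- {a_err:.3f}, d = {d_fit:.3f} +/- {d_err:.3f}")

# Cross-check: log A = log a + d log r is a straight line in log-log space.
slope, intercept = np.polyfit(np.log(radii), np.log(counts), 1)
print(f"log-log fit: a = {np.exp(intercept):.3f}, d = {slope:.3f}")
```

The two methods weight the data differently: the direct fit is dominated by the large-r points, while the log-log fit treats relative deviations at all radii equally, so the two values of d need not agree exactly.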

Now consider the diffusion limited aggregation (DLA) simulations we have worked with. We can apply the same area law method outlined above to a DLA cluster, counting the number of stuck particles inside a given radius. You can see from Fig. 2 that the voids in the cluster mean the number of particles will grow more slowly than the number of grid sites shown in Fig. 2. If you fit a function N(r) = ar^d to the number of particles vs. r, the exponent d is called the fractal dimension of the cluster. Since the cluster has a finite size, one needs to be careful to use circles that are small enough that they don’t simply include a lot of empty space beyond the cluster dimensions.
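Counting particles within a radius could be done along the following lines. The sketch assumes the cluster is stored as a 2D array that is nonzero at stuck-particle sites with the seed at the grid center; the actual DLA program may store its results differently. The function name `count_within_radii` is hypothetical.

```python
import numpy as np

def count_within_radii(grid, radii, center=None):
    """Return the number of occupied sites within each radius of the center.

    Assumptions: `grid` is a 2D array that is nonzero where a particle is
    stuck, and the cluster seed sits at the grid center unless `center`
    is given as (row, column).
    """
    if center is None:
        center = ((grid.shape[0] - 1) / 2, (grid.shape[1] - 1) / 2)
    rows, cols = np.nonzero(grid)
    dist = np.sort(np.hypot(rows - center[0], cols - center[1]))
    # For sorted distances, searchsorted(..., side="right") counts dist <= r.
    return np.searchsorted(dist, radii, side="right")

radii = np.array([1.0, 5.0, 10.0, 15.0])

# Two simple test shapes with known scaling, in place of a real cluster.
filled = np.ones((41, 41), dtype=bool)   # every site occupied: N grows like r^2
line = np.zeros((41, 41), dtype=bool)
line[20, :] = True                       # a single row occupied: N grows like r

print("filled grid:", count_within_radii(filled, radii))
print("single line:", count_within_radii(line, radii))
```

A DLA cluster should fall between these two cases, which is why its fitted exponent is not an integer. The largest radius here stays well inside the 41 by 41 grid, in line with the caution about empty space.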

Your assignment is to write a program to compute the fractal dimension of DLA clusters in 2 dimensions. There is an updated DLA Python program on icon that has been sped up with numba and improves how new particles are started off: they start closer to the cluster and are distributed uniformly around the center. With these improvements, you shouldn’t have to wait too long to generate a nice big cluster.

Rather than writing a single program, you will probably want to write multiple programs that save results in files that are read by subsequent programs. The basic procedure is:

- (1) Generate a cluster and count the number of particles within different radii.

- (2) You will need to play with the cluster size and also choose a maximum radius that isn’t too big.

- (3) Use scipy.optimize.curve_fit() (or something else) to fit a function N(r) = ar^d to your data.

- (4) Repeat the above. Each time you will get a slightly different value of the fractal dimension d. Accumulate these measurements using the Datum class to determine the mean and standard deviation of d.

- (5) As a bonus, you can see how the size of the clusters affects the error in the final result.
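The steps above can be sketched as one loop. Two stand-ins are used here and both are assumptions: `standin_cluster` fills a grid at random in place of the DLA program (so the fitted d should come out near 2, not at a fractal value), and plain numpy statistics take the place of the Datum class, whose interface is not described here. All function names are hypothetical.

```python
import numpy as np
from scipy.optimize import curve_fit

def power_law(r, a, d):
    """Model function N(r) = a * r**d."""
    return a * r**d

def fit_dimension(grid, radii):
    """Count occupied sites within each radius of the grid center, then fit for d."""
    cy, cx = (grid.shape[0] - 1) / 2, (grid.shape[1] - 1) / 2
    rows, cols = np.nonzero(grid)
    dist = np.sort(np.hypot(rows - cy, cols - cx))
    counts = np.searchsorted(dist, radii, side="right")
    (a, d), _ = curve_fit(power_law, radii, counts, p0=(1.0, 1.5))
    return d

def standin_cluster(rng, size=201, fill=0.5):
    """Placeholder for the DLA program: each site is occupied with probability `fill`."""
    return rng.random((size, size)) < fill

rng = np.random.default_rng(1)
radii = np.arange(10.0, 91.0, 10.0)   # maximum radius kept inside the 201 x 201 grid

# Step (4): repeat the measurement and accumulate the values of d.
d_values = np.array([fit_dimension(standin_cluster(rng), radii) for _ in range(20)])

# numpy statistics used here as a stand-in for the Datum class.
d_mean = d_values.mean()
d_std = d_values.std(ddof=1)              # sample standard deviation
d_err = d_std / np.sqrt(d_values.size)    # standard error of the mean
print(f"d = {d_mean:.4f} +/- {d_err:.4f} (std = {d_std:.4f}, n = {d_values.size})")
```

In a multi-program workflow, each run could instead save its counts to a file (for example with numpy.savetxt) and leave the fitting and averaging to a later program. For the bonus in step (5), the same loop could be repeated for several cluster sizes and `d_std` compared across them.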

Articles with more information on diffusion limited aggregation and fractal dimensions can be found on Wikipedia.